Beyond Legal #21: The engineer who built exactly what he was asked

Data protection by design? She wrote the DPIA. I just built what I was asked. — Sam R., software engineer, 2025

There is a sentence I have heard in various forms in a few companies, and it is not comfortable to hear. “I just built what I was asked.” It is rarely said out of spite. It is usually said sincerely. And that is the problem.

Sam’s story

Sam is a mid-level software engineer at a fast-growing SaaS company that operates across the EU. He writes clean code, ships on time, and has a reputation for being low-drama during sprint planning. He does not think much about data protection, not because he doesn’t care, but because the data protection team has rarely engaged with him.

The Data Protection Leader, Claire, had been appointed two years earlier. She was thorough, held many privacy certifications, and was entirely focused on the legal side of her role: lawful bases, data subject rights procedures, records of processing activities. She was good at that work. But she had made a flawed assumption that would eventually cost her the job: that data protection by design and by default was something she could briefly mention in a paragraph, and that engineering would handle the rest.

The DPIA that nobody finished reading

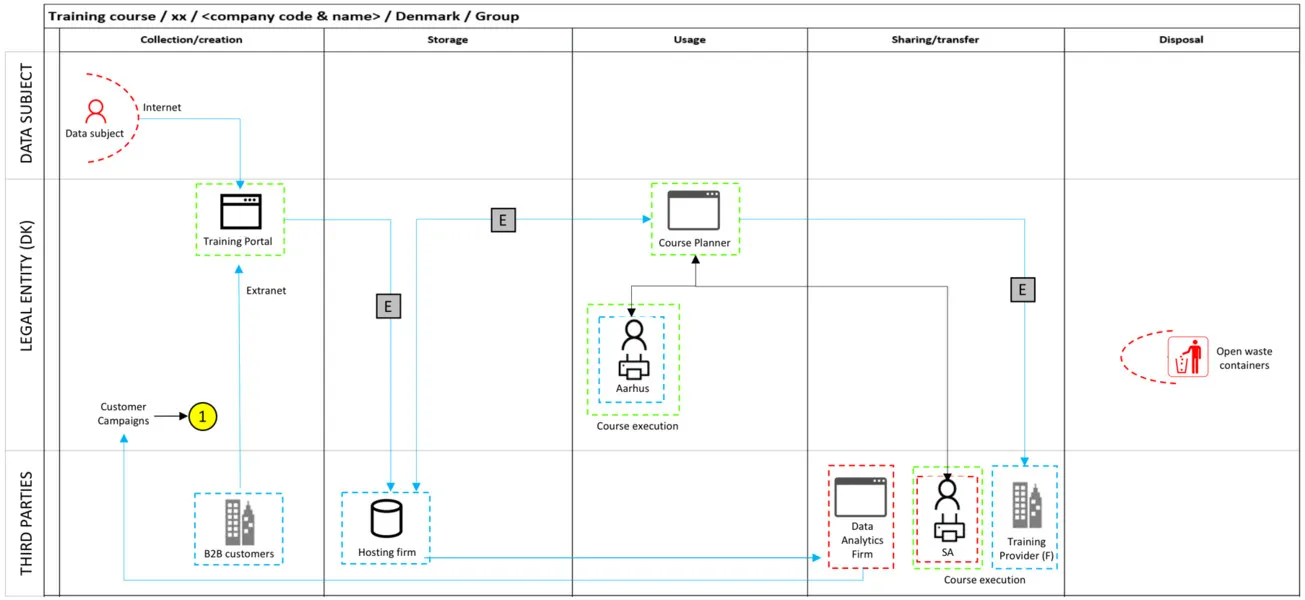

When the product team began building a new behavioural analytics feature, Claire carried out a data protection impact assessment (DPIA). At first glance, it was well-structured. It identified risks, though they were generic and high-level. It made clear recommendations: pseudonymisation of user identifiers, configurable retention periods, and granular consent mechanisms built into the user interface.

It was shared with the engineering team as a PDF attachment in a ticket description.

Sam received the ticket. He read the acceptance criteria at the top. He struggled with the DPIA. He had never been involved in a DPIA process, had never received contextual education or training, and did not know what the recommendations meant for what he needed to build. The ticket said: “Build analytics event tracking for the onboarding flow.” So he did.

The feature shipped. It tracked broadly and retained data indefinitely. The consent mechanism was a single toggle buried in account settings, defaulted to on. The personal data of tens of thousands of data subjects was being processed in a way that did not reflect the intended design.

What the Supervisory Authority found

A data subject complaint triggered an inquiry. Investigators found a huge gap between what the DPIA recommended and what was actually built — enough to constitute a failure of accountability under GDPR. The company could not demonstrate that data protection had been integrated by design and by default, as required under Article 25.

The case was picked up by the media, and the company suddenly found itself under unwanted scrutiny.

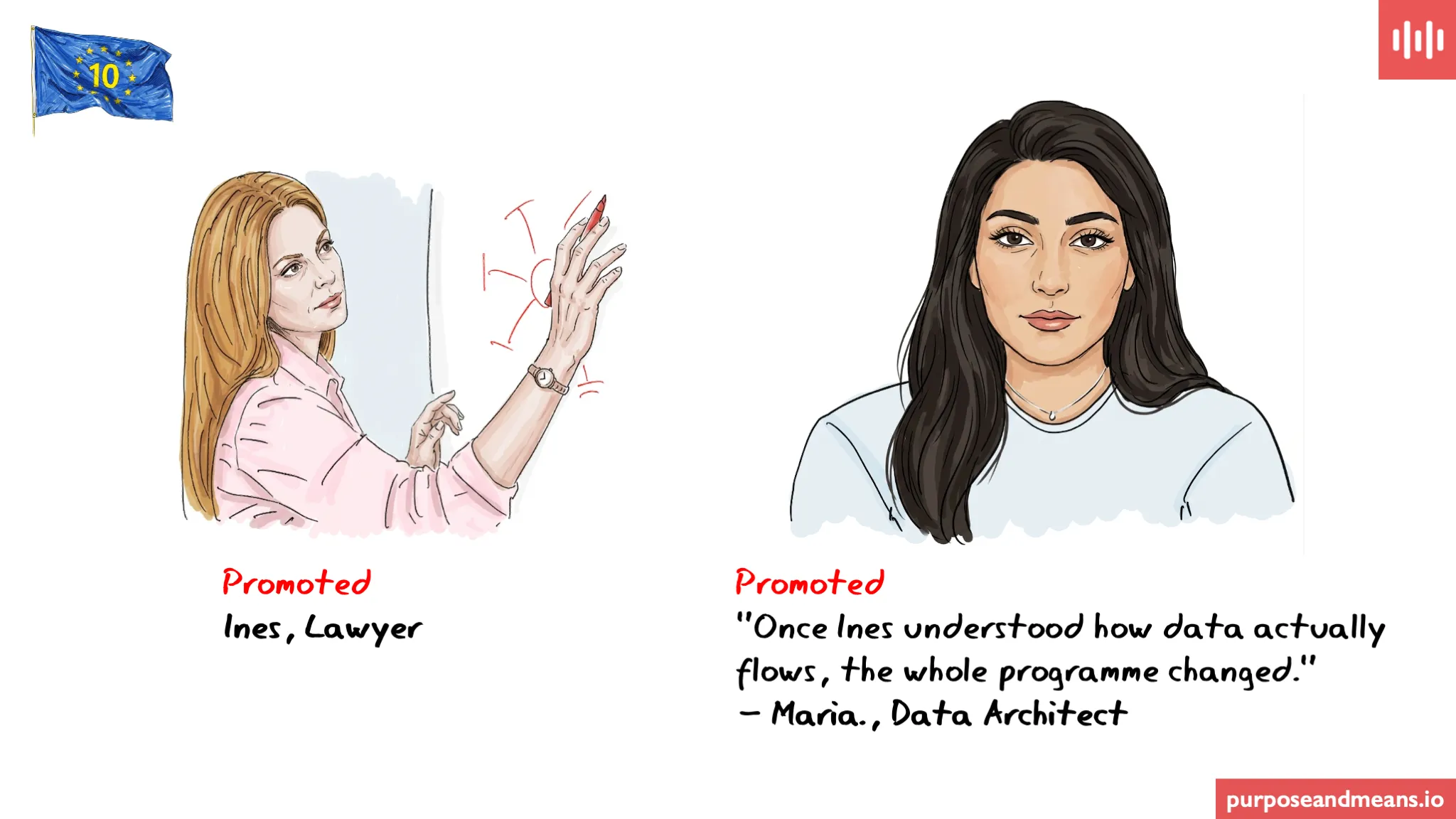

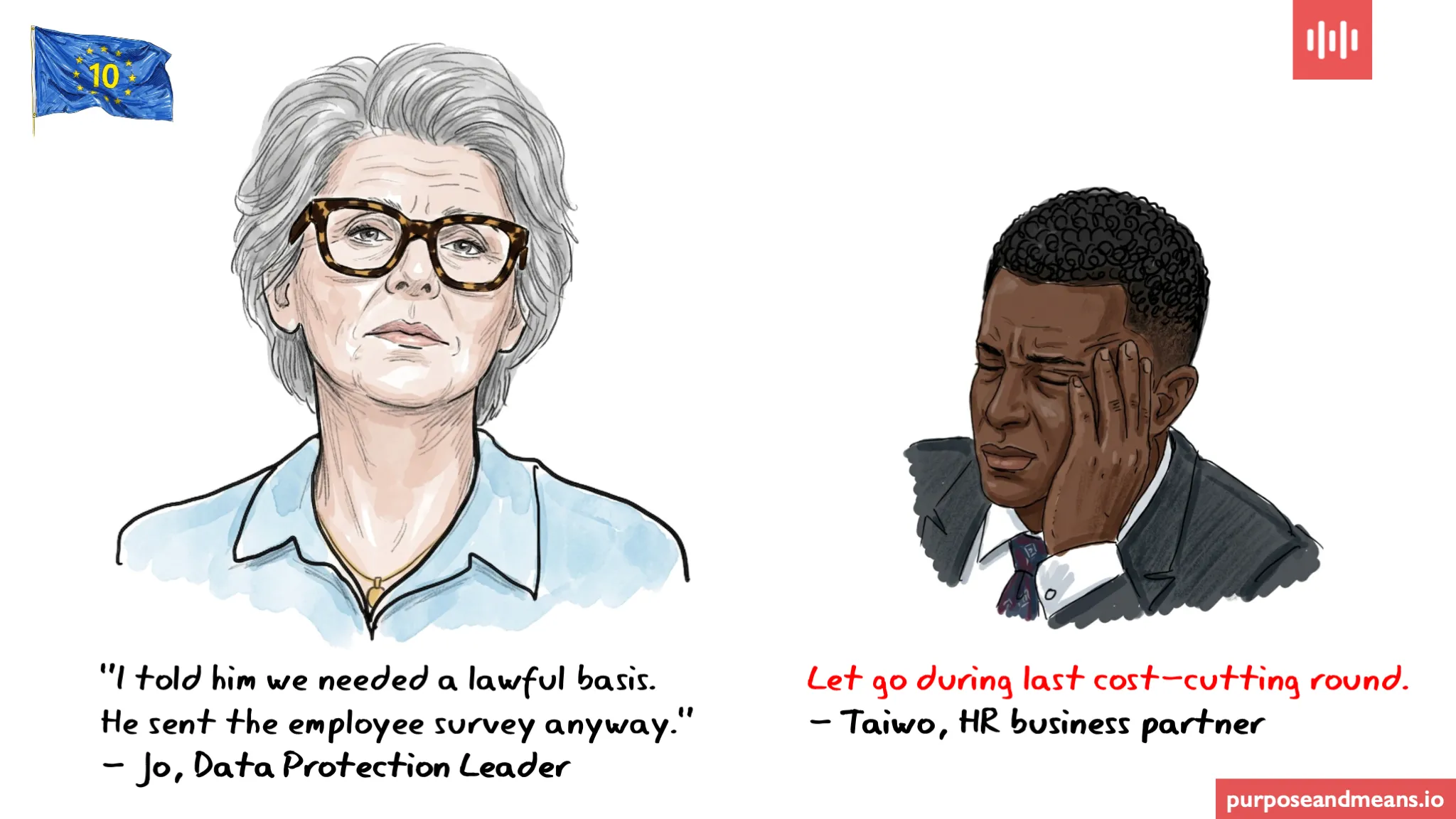

Claire was held accountable, not Sam. Claire — because she had signed off the feature as “compliant” without verifying that her recommendations had been implemented.

She was let go within a couple of months of the inquiry outcome. Sam still works there.

The structural failure

Obviously, this was not a malicious failure. It was a structural one, and this kind of scenario is one I have encountered on a few occasions.

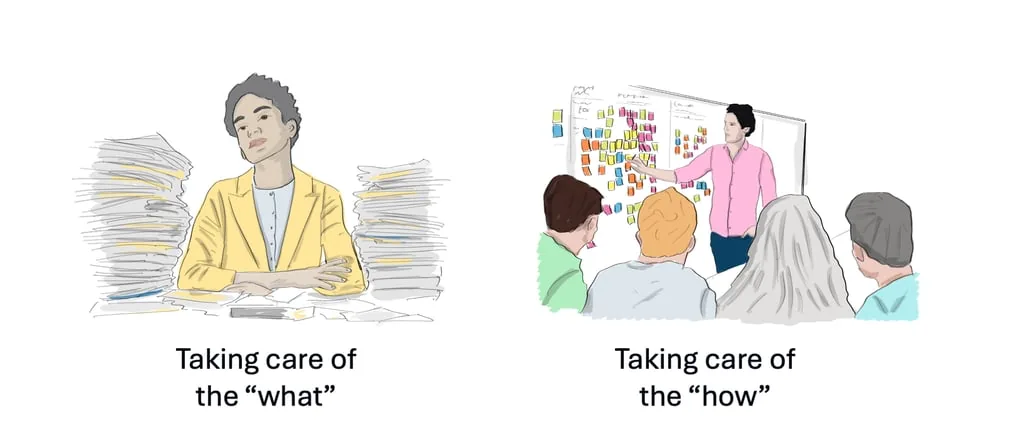

Claire treated data protection by design as a legal exercise — a document that, once produced, discharged her obligation. She had never translated her DPIA recommendations into engineering acceptance criteria. She had never participated in a sprint review. She had never asked Sam what it would actually take to implement pseudonymisation in their data model.
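As a rough illustration of what translating the DPIA into acceptance criteria could look like, here is a minimal sketch in Python. Everything in it is an assumption made for the example: the `AnalyticsConfig` fields, the `unmet_criteria` function and the 90-day retention value are hypothetical and do not come from the story or from any real product. The point is only that each recommendation becomes a check that can pass or fail before the feature ships.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AnalyticsConfig:
    """Hypothetical settings for the analytics feature (illustrative names)."""
    pseudonymise_user_ids: bool
    retention_days: Optional[int]  # None means "retain indefinitely"
    consent_default_on: bool
    consent_per_purpose: bool      # False means a single all-or-nothing toggle


def unmet_criteria(config: AnalyticsConfig) -> List[str]:
    """Return the DPIA recommendations that this configuration does not meet."""
    failures = []
    if not config.pseudonymise_user_ids:
        failures.append("user identifiers must be pseudonymised")
    if config.retention_days is None:
        failures.append("retention period must be finite and configurable")
    if config.consent_default_on:
        failures.append("consent must default to off")
    if not config.consent_per_purpose:
        failures.append("consent must be granular, one choice per purpose")
    return failures


# The feature as it shipped in the story: no pseudonymisation,
# indefinite retention, a single toggle that defaults to on.
as_shipped = AnalyticsConfig(
    pseudonymise_user_ids=False,
    retention_days=None,
    consent_default_on=True,
    consent_per_purpose=False,
)

# One configuration that would pass. The 90 days is an arbitrary example value.
as_recommended = AnalyticsConfig(
    pseudonymise_user_ids=True,
    retention_days=90,
    consent_default_on=False,
    consent_per_purpose=True,
)

for failure in unmet_criteria(as_shipped):
    print("NOT MET:", failure)
```

Checks like these could sit in the ticket as acceptance criteria or run in the test suite. Either way, a sign-off would then rest on something verified, not on a PDF nobody finished reading.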

Article 25 is unambiguous. Data protection by design and by default requires that appropriate technical and organisational measures are built into the processing itself. The DPIA is only the beginning of a conversation, one that has to continue in the departments where the product actually gets designed and built. Claire never had those conversations.
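Pseudonymisation, one of the DPIA's recommendations, shows what a measure inside the processing itself can mean in practice. The sketch below assumes a keyed hash (HMAC-SHA256 from Python's standard library) as the pseudonymisation technique. That is one common option, not the one the story prescribes. The function names, event fields and placeholder key are all hypothetical.

```python
import hashlib
import hmac


def pseudonymise(user_id: str, secret_key: bytes) -> str:
    """Derive a stable pseudonym from a user identifier using a keyed hash."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def build_event(user_id: str, event_name: str, secret_key: bytes) -> dict:
    """Build the analytics record; the raw identifier is never stored in it."""
    return {
        "subject": pseudonymise(user_id, secret_key),
        "event": event_name,
    }


# Placeholder only. In practice the key would be kept separately from the
# analytics data, with restricted access (an assumption of this sketch).
key = b"example-key-kept-outside-the-analytics-store"

event = build_event("user-1042", "onboarding_step_completed", key)
print(event)
```

The same user always maps to the same pseudonym, so the analytics still work. Linking a record back to a person requires the key, which is why the key has to be stored and governed separately. Pseudonymised data is still personal data under GDPR, so this reduces risk but does not remove the other obligations.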

If you are a Data Protection Leader reading this

Could you translate your last DPIA into a set of engineering acceptance criteria? Could you explain to a software engineer why pseudonymisation matters at the data model level? Have you ever sat in a sprint review?

If the answer is no to all three, you are not alone. But you are exposed. And so is your company.

The bridge between legal and engineering is not someone else’s job to build. It is yours.

GDPR turns ten: this is a fictitious story, part of a series of blog posts reminding us that data protection is not just an issue for the legal team. See “Why the backstory of GDPR matters” to read about some of the key dates and events.

Purpose and Means works with organisations on data protection strategy, governance, education and training — going beyond the legal text to focus on how things actually get done. If you’d like to discuss what this means for your company, book a call or explore our services.