Beyond Legal #25: The CISO who thought data protection was a subset of security

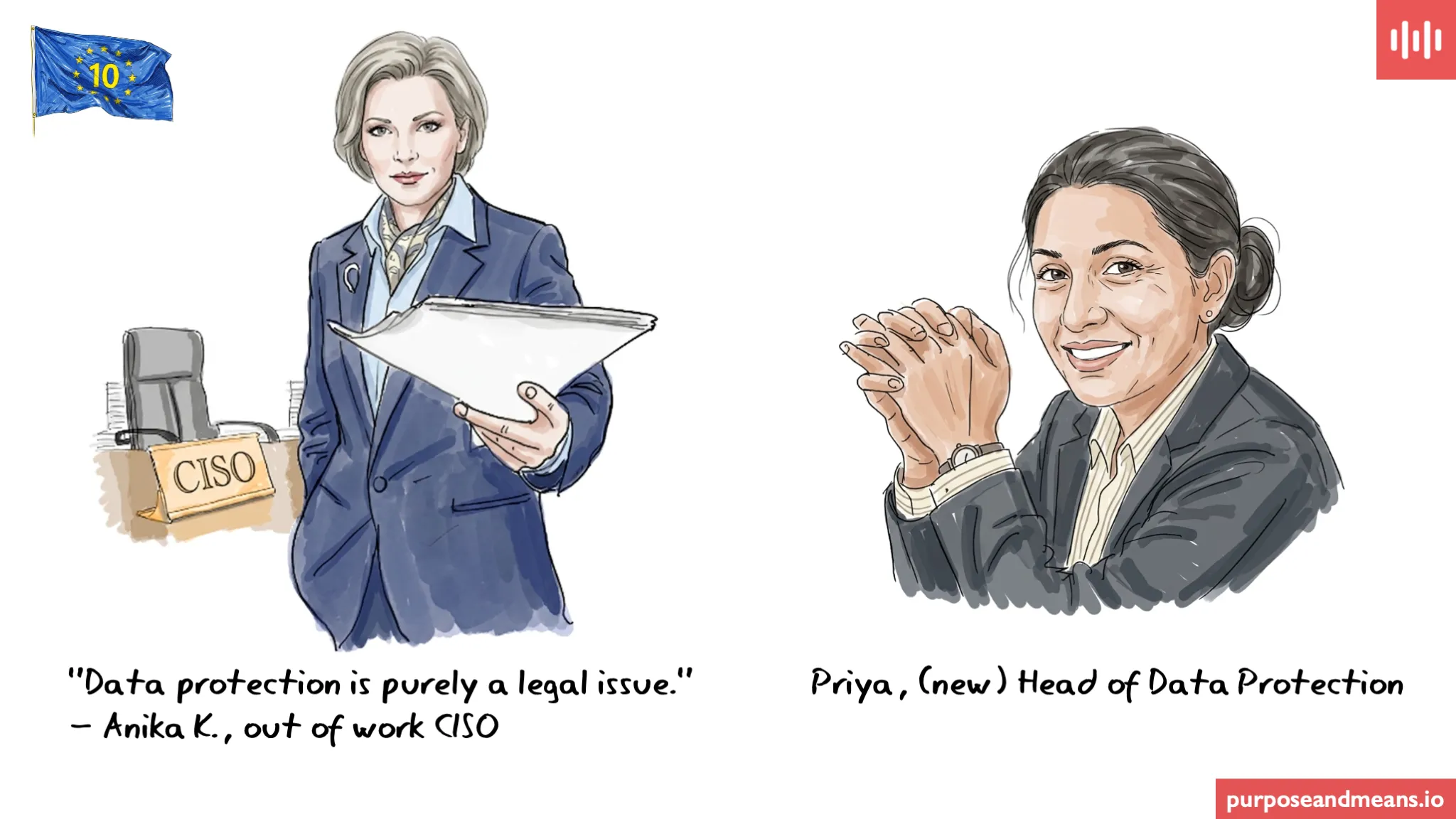

Data protection is purely a legal issue. — Anika K., out of work CISO, 2026

I have heard a version of this in a few companies I have worked with over the years. Sometimes it comes from legal, treating data protection as a compliance task. More often lately, it comes from a security team that has absorbed the data protection function and genuinely believes the two disciplines are the same thing. They are not. And when companies are structured as if they are, the consequences tend to be expensive for the company and soul-destroying for the data protection colleagues.

Anika’s story

Anika is a CISO with a strong track record — fifteen years in information security, a respected certification portfolio, a familiar face on the speaker circuit and a reputation for running tight security operations. When she joined a mid-sized financial services company, she inherited a structure that felt, to her, entirely sensible: the data protection function reported into her team. Security owned the data protection programme.

Her logic was not unreasonable on its face. Data protection requires secure systems. She ran secure systems. The two belonged together.

The Data Protection Leader — call her Priya — had a different view, and the tension between them was never fully resolved. When the company began processing customer behavioural data for a new marketing analytics platform, Priya wanted to establish the lawful basis, conduct a Data Protection Impact Assessment, and assess whether the processing was compatible with the purposes for which the data had originally been collected. Anika’s team cleared it from a security perspective. The data was encrypted in transit. Access controls were in place. The platform had been penetration tested.

In Anika’s framework, that was the job done.

Is data protection the same as information security under the GDPR?

No, and treating the two as the same is one of the most consequential governance errors a company can make. You can have strong information security and still violate the GDPR. Security is a necessary condition for data protection, but it is not a sufficient one. A technically secure system can still be processing personal data without a valid lawful basis under Article 6, incompatibly with the purposes for which the data was originally collected, for longer than the storage limitation principle in Article 5(1)(e) permits, without the transparency that Articles 13 and 14 require, and without the DPIA that Article 35 mandates for high-risk processing. The lock on the door tells you nothing about whether what is inside the room is lawful. On the other hand, you cannot have data protection without security, because Article 32 requires appropriate technical and organisational measures as a baseline. The relationship is one-directional: security is a floor, not a ceiling.
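The floor-versus-ceiling point can be made concrete with a small sketch. It is an illustration under stated assumptions, not a legal test: the `ProcessingActivity` record, its field names, and the values given to the example platform are all hypothetical, and a real assessment involves judgment that no boolean flag captures.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProcessingActivity:
    """Hypothetical record of one processing activity (all fields are assumptions)."""
    name: str
    # What a security review typically answers
    encrypted_in_transit: bool
    access_controls: bool
    penetration_tested: bool
    # What a data protection review also has to answer
    lawful_basis: Optional[str]
    purpose_compatibility_assessed: bool
    retention_period_defined: bool
    transparency_notice_given: bool
    high_risk: bool
    dpia_completed: bool


def security_cleared(activity: ProcessingActivity) -> bool:
    """True when every security control in this simplified model is in place."""
    return (activity.encrypted_in_transit
            and activity.access_controls
            and activity.penetration_tested)


def data_protection_gaps(activity: ProcessingActivity) -> List[str]:
    """List the open questions a security sign-off alone does not close."""
    gaps = []
    if not security_cleared(activity):
        gaps.append("Article 32: security measures incomplete")
    if activity.lawful_basis is None:
        gaps.append("Article 6: no lawful basis recorded")
    if not activity.purpose_compatibility_assessed:
        gaps.append("Article 5(1)(b): purpose compatibility not assessed")
    if not activity.retention_period_defined:
        gaps.append("Article 5(1)(e): no retention period defined")
    if not activity.transparency_notice_given:
        gaps.append("Articles 13 and 14: data subjects not informed")
    if activity.high_risk and not activity.dpia_completed:
        gaps.append("Article 35: DPIA missing for high-risk processing")
    return gaps


# Illustrative values loosely modelled on the analytics platform in the story.
platform = ProcessingActivity(
    name="marketing analytics platform",
    encrypted_in_transit=True,
    access_controls=True,
    penetration_tested=True,
    lawful_basis=None,
    purpose_compatibility_assessed=False,
    retention_period_defined=False,
    transparency_notice_given=False,
    high_risk=True,
    dpia_completed=False,
)

print("Security cleared:", security_cleared(platform))
for gap in data_protection_gaps(platform):
    print("Open:", gap)
```

In this model the platform passes every security check and still carries five open data protection gaps, which is the situation the security sign-off could not see.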

The deeper issue is the security bias in how data itself gets framed. Security culture thinks in terms of data at rest and data in transit — the data you encrypt, lock down, and protect behind access controls. It does not naturally extend to data that are shared, uploaded to platforms, already publicly visible, or held in open records. And yet the GDPR covers all of it. Personal data that a data subject has posted on social media are still personal data with all the same obligations. Names, addresses, and identifiable information in public records are still personal data. Data scraped from a website are still personal data. The fact that data are publicly available is not a lawful basis under Article 6. It is not even a mitigating factor. A CISO operating only with a lock-and-key model is working with an approach that covers half the scope — and the company is exposed everywhere this approach is implemented.

When Priya raised the DPIA requirement for the analytics platform — which was supplementing first-party data with information pulled from public sources, social media profiles, and professional directories — Anika closed the conversation down. A DPIA was a compliance document. Security had already assessed the risk. The project launched.

What the incident revealed

When the Supervisory Authority asked to see the lawful basis for enrichment processing using publicly sourced data, the completed DPIA, and the updated records of processing activities covering the new data flows, the company had none of it. Priya had not been included in the decision. Anika had cleared it from a security standpoint — which was, in the regulator’s view, entirely beside the point.

Priya retained her role, and the data protection function was moved out from under security. Anika did not survive the regulatory investigation.

What does enforcement look like when a security-first approach fails data protection?

Two cases from 2024 show precisely where the security approach fails — and what regulators find when they look behind it.

In April 2024, the Czech data protection authority fined Avast Software €13.9 million — a record fine for the Czech Republic — for unlawfully transferring the browsing data of approximately 100 million users to its subsidiary Jumpshot Inc. Avast argued the data had been fully anonymised and was therefore outside the scope of the GDPR. The authority found it had not been anonymised at all — it had been pseudonymised, tied to a unique identifier, and was re-identifiable. The Czech DPA’s observation gets to the heart of the security-as-data-protection assumption: Avast was one of the foremost cybersecurity companies in the world, offering data and privacy protection tools to the public. Its users could not have expected that this company in particular would transfer their personal data. Exceptional security credentials did not translate into data protection compliance. They were, in this case, actively at odds with it.
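The difference between pseudonymised and anonymised data is easier to see in a toy example. The sketch below is not a reconstruction of how Avast or Jumpshot handled data; the salt, the sample events, and the `pseudonymise` helper are all hypothetical. It only shows why replacing a name with a stable unique identifier leaves records linkable to one person.

```python
import hashlib
from collections import defaultdict


def pseudonymise(user_id: str, salt: str = "hypothetical-salt") -> str:
    """Replace a direct identifier with a stable token (assumed scheme)."""
    digest = hashlib.sha256((salt + user_id).encode("utf-8")).hexdigest()
    return digest[:16]


# Made-up browsing events: (direct identifier, visited page)
events = [
    ("alice@example.com", "clinic.example/appointments"),
    ("alice@example.com", "bank.example/login"),
    ("bob@example.com", "news.example/sport"),
]

# The direct identifier is gone, but the same person always maps to the same token.
pseudonymised = [(pseudonymise(uid), url) for uid, url in events]

# Grouping by token rebuilds one browsing profile per individual.
profiles = defaultdict(list)
for token, url in pseudonymised:
    profiles[token].append(url)

for token, urls in profiles.items():
    print(token, urls)
```

The email addresses no longer appear in the output, yet each token still collects one individual's browsing history. Anyone who can connect a token back to a person, or recognise someone from the history itself, has re-identified them, which is why pseudonymised data remains personal data under the GDPR.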

In February 2024, Italy’s Garante fined Enel Energia €79.1 million — its largest fine ever — after an investigation found that the company’s customer management systems had serious security shortcomings allowing unauthorised agents to access and process personal data for years. Credentials to the platform could be used on multiple devices simultaneously. Unauthorised agencies exploited this to conduct unlawful telemarketing using illicitly acquired customer data. The controls that existed on paper did not function in practice. The finding was not simply that the systems were insecure — it was that the processing of personal data through those systems was unlawful, and that Enel as controller was accountable regardless of the fact that the direct actors were third parties. A security review of the platform existed. A data protection assessment of what was actually happening to personal data within it did not.
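One of the shortcomings described above, a single credential in use on several devices at once, is the kind of thing a simple log check can surface. The sketch below assumes a hypothetical `Session` record with times expressed as minutes since midnight. It is not a description of Enel's systems, and detecting the pattern is a security control only: it says nothing about whether the processing carried out in those sessions was lawful.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Iterable, Set


@dataclass(frozen=True)
class Session:
    """Hypothetical login session; start and end are minutes since midnight."""
    credential_id: str
    device_id: str
    start: int
    end: int


def shared_credentials(sessions: Iterable[Session]) -> Set[str]:
    """Return credentials with overlapping sessions on different devices."""
    by_credential = defaultdict(list)
    for session in sessions:
        by_credential[session.credential_id].append(session)

    flagged = set()
    for credential, group in by_credential.items():
        group.sort(key=lambda s: s.start)
        for i, first in enumerate(group):
            for second in group[i + 1:]:
                if second.start >= first.end:
                    break  # sorted by start, so no later session overlaps `first`
                if first.device_id != second.device_id:
                    flagged.add(credential)
    return flagged


sessions = [
    Session("agent-1", "device-A", 0, 60),
    Session("agent-1", "device-B", 30, 90),   # overlaps on a second device
    Session("agent-2", "device-A", 0, 60),
    Session("agent-2", "device-A", 30, 50),   # same device, not flagged
    Session("agent-3", "device-A", 0, 30),
    Session("agent-3", "device-B", 30, 60),   # back to back, no overlap
]

print(shared_credentials(sessions))
```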

In both cases, the companies had security functions. Neither lacked technical capability. What they lacked was a data protection function operating independently of the security framework.

What does Article 32 of the GDPR actually require from a CISO?

Article 32 of the GDPR requires controllers and processors to implement technical and organisational measures appropriate to the risk to the rights and freedoms of natural persons — taking into account the state of the art, implementation costs, and the nature, scope, context, and purposes of the processing. This is a data protection requirement with a security dimension, not a security requirement that compliance signs off on. The risk Article 32 asks companies to assess is not the risk to systems. It is the risk to people. That assessment requires the CISO and the Data Protection Leader to be working together, from the same framework, on the same question — with neither function subordinated to the other. Structures that place data protection inside the security team do not produce that outcome. They produce companies that can demonstrate they locked the door, but cannot explain why they opened it in the first place.

The challenge for today: Draw the organisational chart for your data protection function. Does it report into security, legal, or independently? Now ask whether that structure would survive scrutiny from a Supervisory Authority — not on the security measures it produces, but on the data protection decisions it enables.

For more on how technical decisions carry data protection consequences, see Beyond Legal #21 on data protection by design, and Beyond Legal #22 on what happens when architecture and data protection operate in separate silos.

Article references: Article 5(1)(a) (lawfulness, fairness, transparency), Article 5(1)(b) (purpose limitation), Article 5(1)(e) (storage limitation), Article 5(1)(f) (integrity and confidentiality), Article 6 (lawful basis), Articles 13 and 14 (transparency obligations), Article 25 (data protection by design and by default), Article 30 (records of processing activities), Article 32 (security of processing), Article 35 (data protection impact assessment).

Series: This is post 25 in the Beyond Legal series (40 roles, 20 days, real consequences). The story about Anika and Priya is fictitious; the Czech and Italian cases are real.

Purpose and Means works with organisations on data protection strategy, governance, and compliance — going beyond the legal text to focus on how things actually get done. If you’d like to discuss what this means for your organisation, book a call or explore our services.