Beyond Legal #34: The data scientist who understood what the model was actually doing

She understood what the model was actually doing with personal data. That is rare. — Elias J., Data Scientist

Quite a few data protection assessments of machine learning models are conducted at the wrong level of abstraction. The Data Protection Leader reviews the stated purpose of the model and the category of data used as inputs. The data scientist knows something the assessment never captures: what the model is actually inferring, what proxies it is using for protected characteristics, and what kinds of outputs are being produced that the product description does not mention. The gap between those two descriptions is where the GDPR exposure lives.

Elias’s story

Elias is a senior data scientist at a pan-European consumer finance company. He builds and maintains the models that assess creditworthiness, predict churn, and personalise product offers. The models are sophisticated, the data pipelines are complex, and the outputs — decisions that directly affect individual data subjects’ access to credit — are significant in ways that go well beyond the marketing framing.

He had never worked with a Data Protection Leader on a model assessment until he met Ingrid.

Ingrid had come into the company as part of a governance strengthening exercise following a Supervisory Authority inquiry at a competitor. Her first action was to map the company’s processing activities. Her second was to identify every instance where the company was using automated processing to make or inform decisions about data subjects. Her third was to sit down with Elias and ask him to explain, in plain terms, what each model was actually doing.

That conversation took several hours. It changed the entire assessment.

What Elias told her that the documentation had not

The credit scoring model, documented as a risk-assessment tool using financial history data, was in practice using location data as a proxy — and the locations it was downweighting were correlated with ethnicity in ways the model had learned from historical approval patterns. The model was technically accurate in its predictions. It was also potentially discriminatory in its outputs, in a way that no documentation review would have revealed.
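One way a data scientist might surface a proxy like this is to test how well a supposedly neutral input predicts a protected characteristic. The following is a minimal sketch, not Elias's actual method. It assumes a lawfully held audit sample in which each record carries a coarse location code and a group label. The field names `postcode_area` and `group`, the function `proxy_strength`, and the sample data are all hypothetical.

```python
from collections import Counter, defaultdict


def proxy_strength(records, feature, protected):
    """Compare how well `feature` predicts `protected` against a naive baseline.

    Returns (baseline_accuracy, feature_accuracy). A large gap suggests the
    feature carries information about the protected characteristic.
    """
    if not records:
        raise ValueError("records must not be empty")

    # Baseline: always guess the most common protected group.
    overall = Counter(r[protected] for r in records)
    baseline = overall.most_common(1)[0][1] / len(records)

    # Feature-based guess: for each feature value, guess its most common group.
    by_value = defaultdict(Counter)
    for r in records:
        by_value[r[feature]][r[protected]] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())

    return baseline, correct / len(records)


# Hypothetical audit sample: the areas and group labels are made up.
sample = (
    [{"postcode_area": "A", "group": "x"}] * 45
    + [{"postcode_area": "A", "group": "y"}] * 5
    + [{"postcode_area": "B", "group": "x"}] * 10
    + [{"postcode_area": "B", "group": "y"}] * 40
)

baseline, with_feature = proxy_strength(sample, "postcode_area", "group")
print(f"baseline={baseline:.2f} with_feature={with_feature:.2f}")
# prints "baseline=0.55 with_feature=0.85"
```

In this made-up sample, knowing the area lifts the guess from 55% to 85% correct, which is the kind of gap that would justify a closer look. The accuracy is measured in-sample, so a feature with many distinct values will look stronger than it is; a held-out split would give a fairer reading.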

The churn prediction model, documented as a retention tool, was generating outputs that were then used to restrict access to certain promotional offers — a decision that materially affected data subjects without any human review, any notification to the data subject that an automated decision had been made, or any mechanism for the data subject to contest the outcome. This was a textbook Article 22 violation: significant decisions affecting data subjects, made solely on the basis of automated processing, without the safeguards the GDPR requires.

Ingrid had the technical vocabulary to understand what Elias was describing and the legal framework to explain why it mattered. Elias had never had that conversation before, because nobody from the data protection team had ever asked him what the models were doing rather than what they were supposed to be doing.

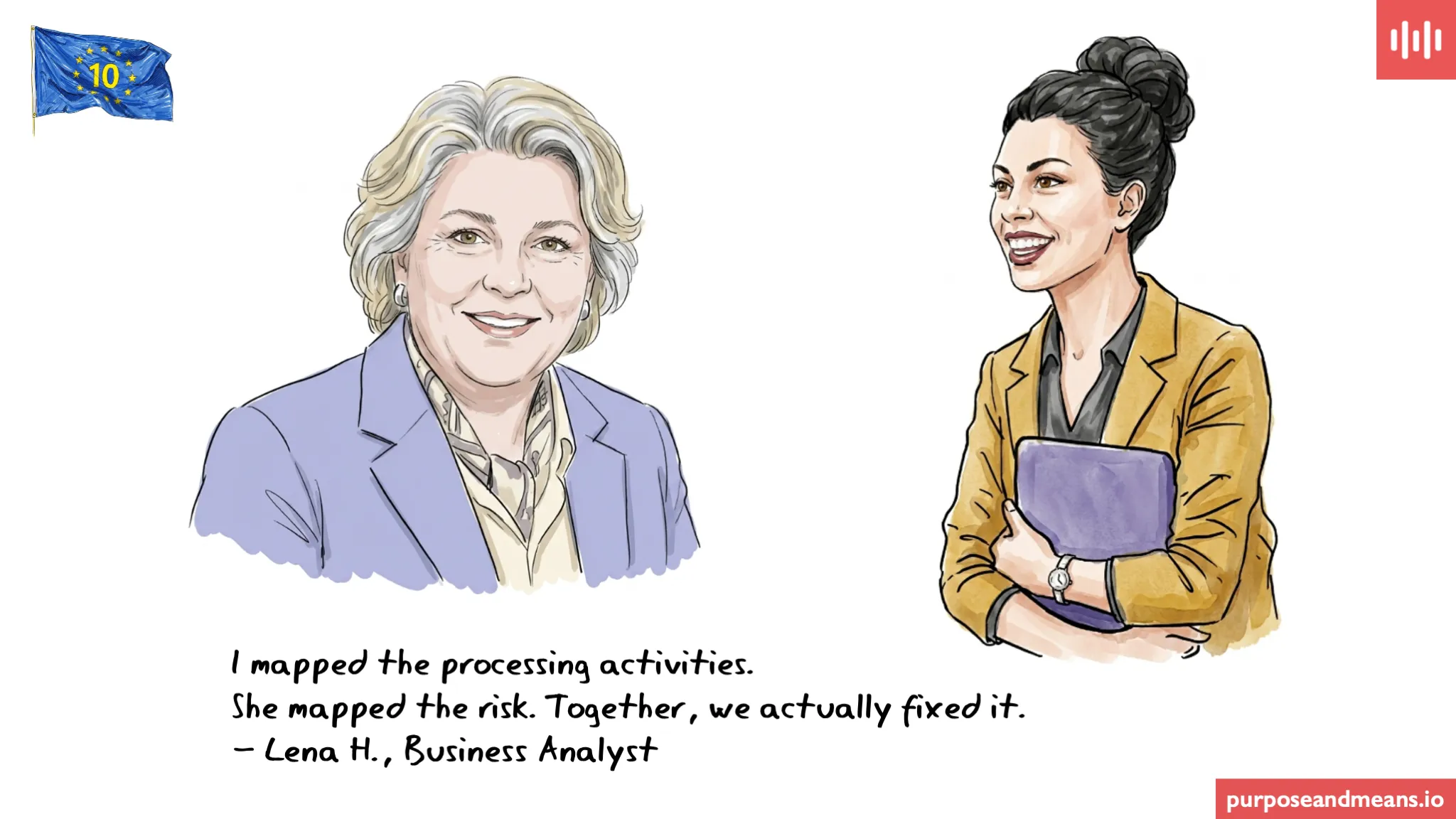

Together they rebuilt the DPIA framework for all automated processing. The credit model was retrained. The churn model was redesigned with a human review step and a mechanism for data subjects to request an explanation and contest decisions. Ingrid became the company’s strategic lead for data governance. Elias was cited in her board presentation as a critical contributor to the remediation.
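The redesign described above, with a human review step and a way to contest, can be sketched as a routing layer between the model and whatever acts on its output. This is an illustrative sketch only: the class names, the `significant_effect` flag, and the action strings are assumptions, and deciding which decision types count as significant is a legal assessment made outside the code.

```python
from dataclasses import dataclass, field
from enum import Enum


class Route(Enum):
    AUTO_APPLY = "auto_apply"
    HUMAN_REVIEW = "human_review"


@dataclass
class ModelOutput:
    subject_id: str
    proposed_action: str      # hypothetical label, e.g. "withhold_offer"
    significant_effect: bool  # set per decision type, e.g. from the DPIA


@dataclass
class DecisionRecord:
    output: ModelOutput
    route: Route
    notice_required: bool
    contest_statements: list = field(default_factory=list)


def route_decision(output: ModelOutput) -> DecisionRecord:
    """Send significant outputs to a reviewer instead of applying them directly."""
    if output.significant_effect:
        return DecisionRecord(output, Route.HUMAN_REVIEW, notice_required=True)
    return DecisionRecord(output, Route.AUTO_APPLY, notice_required=False)


def contest(record: DecisionRecord, statement: str) -> DecisionRecord:
    """Log the data subject's point of view and force a human re-review."""
    record.contest_statements.append(statement)
    record.route = Route.HUMAN_REVIEW
    return record


record = route_decision(ModelOutput("subject-001", "withhold_offer", True))
print(record.route.value, record.notice_required)
# prints "human_review True"
```

The design choice worth noting is that the model never applies a significant outcome itself; it only proposes one, and the record keeps the contest history alongside the decision so the review is auditable.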

What does Article 22 of the GDPR require for automated decision-making?

Article 22 of the GDPR gives data subjects the right not to be subject to a decision based solely on automated processing, including profiling, which produces legal or similarly significant effects. Such a decision is permitted in only three circumstances: where it is necessary for entering into or performing a contract, where it is authorised by Union or Member State law, or where the data subject has given explicit consent. In the contract and consent cases, the controller must implement suitable safeguards: at minimum, the right for the data subject to obtain human intervention, to express their point of view, and to contest the decision. Where the decision is authorised by law, that law must itself lay down suitable safeguards. The controller must also proactively inform data subjects of the existence of automated decision-making and provide meaningful information about the logic involved.

Two enforcement decisions show how seriously supervisory authorities treat this. In 2025, the Hamburg Data Protection Authority fined a financial services provider approximately €492,000 for automatically rejecting credit card applications using algorithmic scoring without providing applicants with meaningful information about the logic of the decision and without offering adequate human review under Article 22(3). The regulator’s finding was specific: the existence of an automated system is not itself the violation; the absence of the safeguards is.

In October 2024, the Irish Data Protection Commission fined LinkedIn €310 million because its use of personal data for behavioural profiling — algorithmically inferring user characteristics from interaction signals to drive advertising targeting — was conducted without a valid lawful basis under Article 6 GDPR and without the transparency that data subjects were entitled to under Articles 13 and 14 about how their personal data was being used. The DPC found that consent obtained from users was not freely given, sufficiently informed, specific, or unambiguous; that contractual necessity could not justify the processing; and that LinkedIn’s legitimate interests were overridden by users’ rights. The case is a reminder that profiling at scale requires both a solid lawful basis and genuine transparency — neither of which can be assessed without understanding what the models are actually doing with personal data.

What does a Data Scientist need to understand about GDPR obligations on automated processing?

A data scientist building models that produce outputs affecting data subjects has direct relevance to three GDPR obligations that are usually treated as data protection team responsibilities. First, Article 35 requires a DPIA before deploying any processing that involves systematic evaluation of personal aspects based on automated processing, where this produces legal or similarly significant effects — which includes most credit, eligibility, and personalisation models. Second, Article 22 requires that where automated decisions with significant effects are made, safeguards are built into the processing architecture, not added as a compliance layer after deployment. Third, Article 13 requires that data subjects are proactively told that automated decision-making is taking place and given meaningful information about the logic involved. None of those obligations can be met without the data scientist’s involvement. A compliance team that reviews a model at the level of its documented purpose is not assessing the model. A data scientist who can explain what the model is actually doing is the starting point for a real assessment.
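The three obligations above can be treated as a pre-deployment gate that the data scientist and the data protection team fill in together. The sketch below is an assumption about how such a gate might look, not a statement of what the GDPR requires in every case: the `ModelRelease` fields and the `release_blockers` function are hypothetical, and the gate only covers the obligations named in this section.

```python
from dataclasses import dataclass


@dataclass
class ModelRelease:
    name: str
    significant_effect: bool       # legal or similarly significant effects
    dpia_completed: bool           # Article 35
    human_intervention_path: bool  # Article 22 safeguard
    contest_mechanism: bool        # Article 22 safeguard
    logic_notice_published: bool   # Article 13(2)(f)


def release_blockers(release: ModelRelease) -> list[str]:
    """Return the open items that should block deployment of this model."""
    if not release.significant_effect:
        # Out of scope for this gate; other obligations may still apply.
        return []
    checks = [
        (release.dpia_completed, "DPIA not completed (Art. 35)"),
        (release.human_intervention_path, "no human intervention path (Art. 22)"),
        (release.contest_mechanism, "no way to contest the decision (Art. 22)"),
        (release.logic_notice_published, "no notice about the logic (Art. 13(2)(f))"),
    ]
    return [message for ok, message in checks if not ok]


# Hypothetical release resembling the original churn model in the story.
churn = ModelRelease(
    name="churn-v1",
    significant_effect=True,
    dpia_completed=True,
    human_intervention_path=False,
    contest_mechanism=False,
    logic_notice_published=False,
)
for blocker in release_blockers(churn):
    print(blocker)
```

The booleans are only as good as the conversation behind them: `significant_effect` in particular should reflect what the model's outputs are actually used for, not what the documentation says.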

The challenge for today: Identify the three models or automated processes in your company that produce the most significant outputs affecting data subjects. For each one, ask the data scientist or analyst who built it two questions: what is the model actually inferring about individuals, and are there any proxy variables in the inputs that could correlate with a protected characteristic? If you have not had that conversation, you have not yet assessed the risk.

For more on how technical expertise connects to data protection accountability, see Beyond Legal #21 on data protection by design in engineering, and Beyond Legal #30 on the gap between documented processing and operational reality.

Article references: Article 5(1)(a) (fairness), Article 5(2) (accountability), Article 13(2)(f) (automated decision-making transparency), Article 22 (automated individual decision-making), Article 35 (data protection impact assessment), Article 83(4) and 83(5) (administrative fines).

Series: This is post 14 in the ‘GDPR at 10’ series: 20 roles, 20 days, real consequences (and fictitious people and scenarios).