Beyond legal #20: Getting real with harms

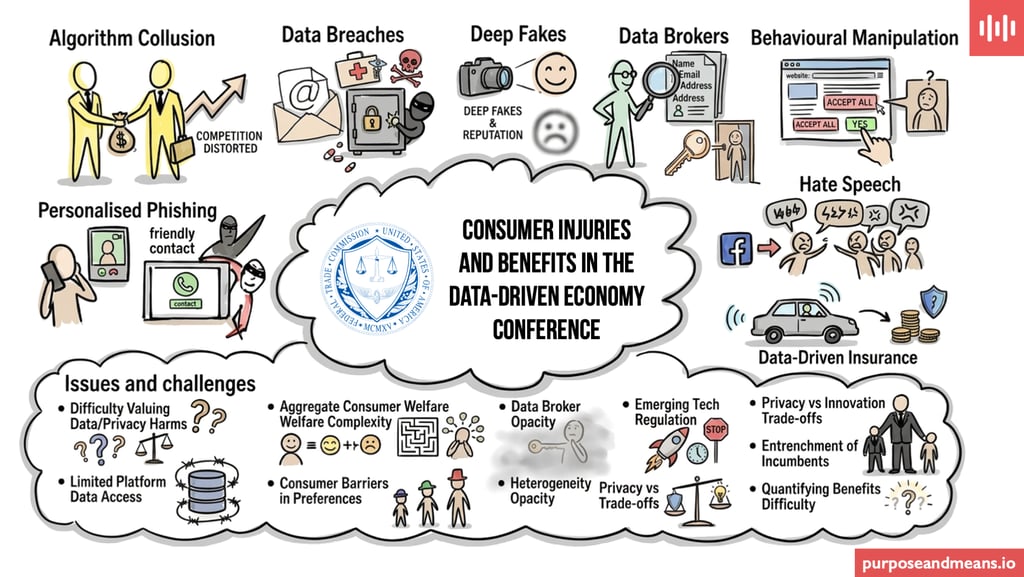

Notes from the FTC’s latest conference on informational harms, highlighting why data protection is a complex economic, societal, and product design issue that is far too critical to be left solely to the legal department.

EDUCATION AND TRAINING, DATA PROTECTION LEADERSHIP, PERSONAL DATA BREACH, GOVERNANCE

Tim Clements

3/9/2026 · 3 min read

I run various education and training courses covering harms resulting from the processing of personal data. At the Federal Trade Commission's (FTC) previous informational harms conference, in 2017, I gleaned many horrific real-life cases that underpin the messages in my courses.

When the FTC recently shared the video recording of its latest Consumer Injuries and Benefits in the Data-Driven Economy conference, held last month, I set aside some time to watch it over several sittings - it is over 7 hours long! Be aware that the recording includes an hour-long lunch break (i.e. silence) - I am not sure why they didn't just edit that out.

What stood out for me this time around was how little discussion there was about regulatory frameworks, GDPR fines, or the exact phrasing of privacy notices or data protection policies.

From my perspective, the conference was more about economics, behavioural psychology, cybersecurity, and sociology. It reinforced my belief that data protection these days is about how we design products, structure markets, and protect society. In other words, it is way, way more than ticking legal and compliance boxes. The recording is available on YouTube here:

If you still believe data protection and privacy are primarily topics for your legal and compliance departments, then watch the video, or read my brief synopsis below and share it with your C-suite colleagues, product teams, technologists and economists (if you have them).

Data protection is also a competition issue

When we leave data protection to the legal department, they focus on articles and recitals, which are of course very important. Bring in economists, however, and they see market distortion.

Algorithmic collusion: The FTC highlighted how sharing granular consumer data allows companies to engage in "algorithmic collusion." Prices become supra-competitive (higher than a competitive market would produce) and consumers face hyper-effective price discrimination.

Compliance monopoly: Common mechanisms like cookie banners and consent interfaces can harm competition. These mechanisms often annoy consumers and add friction that entrenches incumbent platforms. Dominant big tech companies leverage control over ecosystems to maintain their powerful market positions, which is tough on new entrants looking to compete.

Real-world harms are physical, emotional, and societal

Many data protection professionals measure the impact of personal data breaches by the cost of notifying individuals and paying potential fines, but the stories shared during the conference were real-life cases affecting people's lives:

Stories about medical identity theft that corrupts health records, and ransomware attacks that literally disrupt hospital operations and patient safety.

Data brokers that scrape and sell public records (like property transactions and marriage registrations) directly facilitate real-world harms, including stalking and domestic violence.

There were conversations that touched on the algorithmic amplification of hate speech (e.g. Facebook’s role in Myanmar). This is severe societal-level harm, stretching far beyond the scope of data subject rights.

Product innovation and trust

Innovation in the data economy involves a fragile balance of behavioural science and product design.

Usage-based insurance programmes (i.e., monitoring driving habits) can lower premiums and improve road safety, but they face massive consumer resistance due to privacy concerns. This was compared to the political resistance that seatbelt laws faced when they were first introduced.

We often associate personalisation with targeted ads and customised interfaces, but things are much more sophisticated these days, with personalised phishing and deepfakes (like fake video calls from trusted contacts) that cause huge emotional distress and financial ruin, as well as lost employment opportunities.

Behavioural manipulation persists because of continued informational asymmetry. Dark patterns and overwhelming data protection and privacy preferences mean that genuine "informed consent" is effectively impossible for the average person.

Challenges and issues

The conference identified several barriers to addressing these issues, and none of them can be solved by bringing in teams of lawyers or re-writing the privacy policy:

Quantifying harm is difficult: Clear definitions for how to quantify multi-dimensional, context-dependent privacy harms are lacking. How do you put a monetary value on the emotional toll of a deepfake or the loss of autonomy?

Data brokers: The data broker ecosystem is a black box because limited empirical data exists, and we cannot regulate or design against what we cannot see.

The pace of change: Rapid technological innovation (especially in AI) increasingly outpaces existing regulatory frameworks, and researchers face huge challenges in acquiring actual data from platforms in order to conduct studies.

It is clear that while the "data-driven economy" creates many benefits for various groups of stakeholders, it also generates complex, systemic injuries. If your company assigns data protection solely to the legal department, you will lack the competences needed to navigate and address this complexity. Companies need economists to understand data markets, sociologists to understand societal impacts, behavioural psychologists to design ethical choices, and technologists to secure our identities.

I think it is time to look beyond the law.

Purpose and Means is a niche data protection and GRC consultancy based in Copenhagen but operating globally. We work with global corporations, providing services with flexibility and a slightly different approach to the larger consultancies. We have the agility to adjust and change as your plans change. Take a look at some of our client cases to get a sense of what we do.

We are experienced in working with data protection leaders and their teams in addressing troubled projects, programmes and functions. Feel free to book a call if you wish to hear more about how we can help you improve your work.

Purpose and Means

Purpose and Means believes the business world is better when companies establish trust through impeccable governance.

Based in Copenhagen, Operating Globally

tc@purposeandmeans.io

© 2026. All rights reserved.