Beyond legal #19: The Chrome-ification of education and the algorithmic classroom

The recent Danish regulatory ruling against the use of Google in schools is a reminder that the "Googleization of education" is not just a legal compliance issue but a key societal challenge: it commodifies children's data, threatens their rights and freedoms, and erodes traditional teacher autonomy in favour of algorithmic standardisation.

At the start of this month, Datatilsynet (the Danish Data Protection Supervisory Authority) delivered a decision criticising 51 municipalities for their use of Google products in the Danish public school system. Datatilsynet highlighted insufficient data protection measures and warned that the widespread use of Google's suite, together with its reliance on sub-processors outside the EU, violates the GDPR. Its press release is available in Danish.

If you look at the case purely through a compliance lens, you see a story about processing activities, international data transfers and sub-processor agreements. But as I often say in this blog series, we must look Beyond Legal. Data protection is not primarily a topic for legal professionals, because it has huge societal consequences, both positive and negative. From my perspective, this case is more about the fundamental transformation of our education system, the digital rights of our children, and the erosion of the teaching profession itself.

The art of teaching

Teaching runs deep in my blood: over the past five decades, my brother, sister, mother and grandfather have all dedicated their lives to the classroom as teachers and lecturers. I have huge respect for the profession, and it saddens me that the traditional role of the teacher (the mentor, the nurturer, the inspiration for many) is potentially being compromised by technological interference.

This ties into a reflection I shared recently on LinkedIn about Sir Ken Robinson's classic TED Talk on how "schools kill creativity." It is 20 years since the late Sir Ken famously warned against the industrial, factory model of education that processes students rather than nurturing their individual and unique talents. Prompted by some follow-up exchanges within my network, and by the reading I have been doing this month on the platform society, I wanted to get my thoughts down in this post before the month ends.

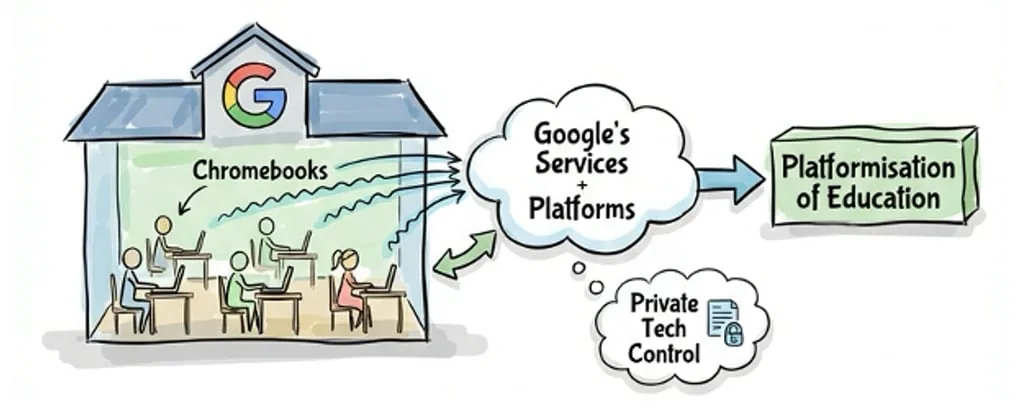

The Googleization of education

Much has been written about this topic by others, including Sonia Livingstone and Professor José van Dijck, and of course Jesper Graugaard ("father of the Danish Chromebook case"), whose work has raised awareness of the widespread adoption of Google's digital services (such as Google Classroom and Google Analytics) and hardware (such as Chromebooks) in educational settings. This adoption transforms classrooms into environments that rely completely on a single tech giant's vertically integrated ecosystem.

As I mentioned in Beyond legal #17: Planting other trees in the forest, we can use van Dijck's metaphor of the "Platformisation Tree" to understand this. Schools' entire educational infrastructure is becoming entangled in the deep, opaque roots of Big Tech. This is part of a broader platformisation of education, in which private tech companies, not educators, control educational tools, data and administration.

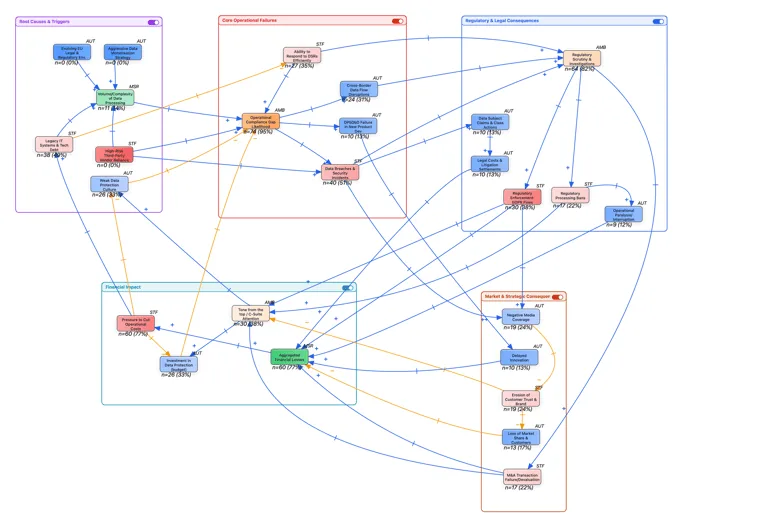

The loss of autonomy

There is much to be concerned about. When we synthesise the work of Livingstone, van Dijck and Graugaard (among others) with the current reality of the classroom, the issues go way beyond GDPR violations:

Data protection & commercialisation: In Beyond legal #18: Data separation as a design strategy, I wrote about the danger of the "Oligopticon": how distinct, partial data gazes can merge into a 360-degree Panopticon. A child's learning progress is one such partial gaze, and when large amounts of behavioural data are collected via a single Google ID within a unified ecosystem, the walls between data silos collapse. Children, who are inherently vulnerable, become targets for data exploitation, and their education is turned into an economic resource.

Loss of teacher autonomy: This is what hurts the most when I think of my family. We are seeing a fundamental change from teacher-led pedagogy to algorithm-driven learning and analytics. Algorithms dictate the pace, standardising and centralising curricula based on platform logic rather than human intuition.

Shift in educational values: Education should be about enabling critical citizenship and cultural growth. Unfortunately, what we are seeing instead is platformisation shifting the focus towards purely measurable skills and data-driven learning outcomes.

Surveillance and continuous monitoring: The use of predictive analytics to track student performance creates an environment of constant surveillance. We risk trapping children in "educational filter bubbles," where content is algorithmically personalised but severely limited, stifling the very creativity Sir Ken championed in his TED Talk back in 2006.

Educational data as a valuable asset

What happens to children's data when they leave school? Educational data is increasingly integrated into social profiles and used for future labour market selection, recruitment and data brokerage. Premium certification services are emerging around this data, raising huge ethical questions about children's rights.

What can we learn from the rise of MOOCs (massive open online courses) and platforms like Coursera? While offering free content, they monetise certificates and unbundle traditional university services. This model reduces teacher autonomy and risks the privatisation of knowledge validation, pushing a global standardisation that bypasses national accreditation entirely.

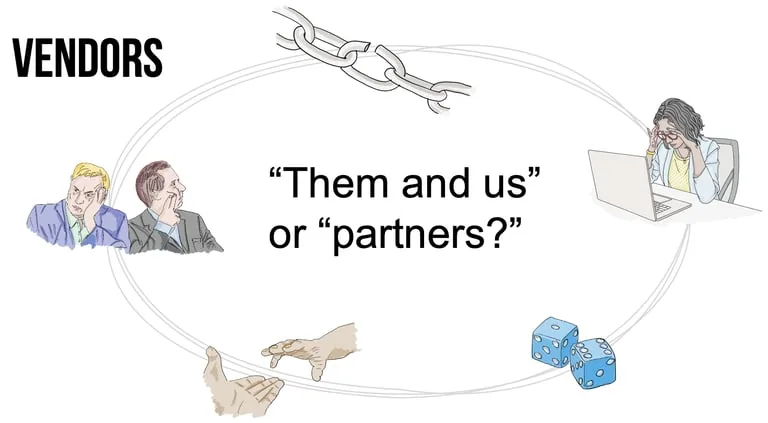

Are there better alternatives?

The Datatilsynet ruling highlights the conflict between public institutions and Big Tech monopolies. As I mentioned in Beyond legal #17, the power imbalance in Data Processing Agreements (DPAs) often leaves municipalities with a "take it or leave it" ultimatum.

If we want to uphold the rights set out in the United Nations Convention on the Rights of the Child (UNCRC) and fully realise the benefits of the GDPR, we need to recognise that legal enforcement only patches the gaps. We need stronger governance and better alternatives that prioritise the interests of children and their teachers. A few thoughts (also drawn from the work of the people mentioned earlier):

Architectural data separation: Recalling Jaap-Henk Hoepman’s strategies from Beyond legal #18, we must proactively design for data separation. Educational data must be architecturally decoupled from commercial ad-tech ecosystems to preserve contextual integrity.

Open-source & FAIR principles: We should promote the development of open-source and open-data educational platforms that follow FAIR principles (Findable, Accessible, Interoperable, Reusable).

Blended learning solutions: We must re-centre the classroom around human interaction, where digital tools assist rather than direct. This will help restore teacher autonomy.
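To make the contrast between a single Google ID and architectural data separation more concrete, here is a minimal sketch in Python under stated assumptions. It uses one common pseudonymisation technique, a keyed hash (HMAC-SHA256) with a separate secret key per context, so that the same pupil gets a stable pseudonym inside one context but cannot be matched across contexts without the keys. This is not Hoepman's method, nor any vendor's implementation; the names `CONTEXT_KEYS`, `pseudonym`, `linkable_records` and the sample data are hypothetical, and the hard-coded keys stand in for random secrets (for example from Python's `secrets` module) that would be held separately in practice.

```python
import hashlib
import hmac

# Hypothetical per-context secret keys, hard-coded for illustration only.
# In practice each would be a random secret held by a different party.
CONTEXT_KEYS = {
    "learning_platform": b"key-held-only-by-the-school",
    "analytics_vendor": b"key-held-only-by-the-vendor",
}


def pseudonym(student_id: str, context: str) -> str:
    """Derive a context-specific pseudonym with HMAC-SHA256.

    Stable within one context, but pseudonyms from different contexts
    cannot be matched to each other without the secret keys.
    """
    key = CONTEXT_KEYS[context]
    return hmac.new(key, student_id.encode("utf-8"), hashlib.sha256).hexdigest()


def linkable_records(a: dict, b: dict) -> set:
    """Return the identifiers present in both datasets (a naive join)."""
    return set(a) & set(b)


# Two partial "gazes" on the same (hypothetical) pupils.
students = ["pupil-001", "pupil-002"]
grades = {s: "B" for s in students}
browsing = {s: ["video-42"] for s in students}

# One global ID shared across contexts: every record joins into one profile.
shared = linkable_records(grades, browsing)

# Separated design: each context only ever sees its own pseudonyms.
grades_sep = {pseudonym(s, "learning_platform"): g for s, g in grades.items()}
browsing_sep = {pseudonym(s, "analytics_vendor"): b for s, b in browsing.items()}
separated = linkable_records(grades_sep, browsing_sep)

print(len(shared), len(separated))  # 2 0
```

With one shared identifier, both pupils' grades and browsing can be merged into a single profile; with per-context pseudonyms, the naive join finds nothing to link. Pseudonymisation alone does not make data anonymous, so a sketch like this would only be one layer of a separation-by-design architecture.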

We need to preserve public values in education: equality of access, democratic governance, and the freedom for children to learn and make mistakes without being permanently profiled. The classroom should be a sanctuary for creative growth, not just another branch on the platformisation tree.